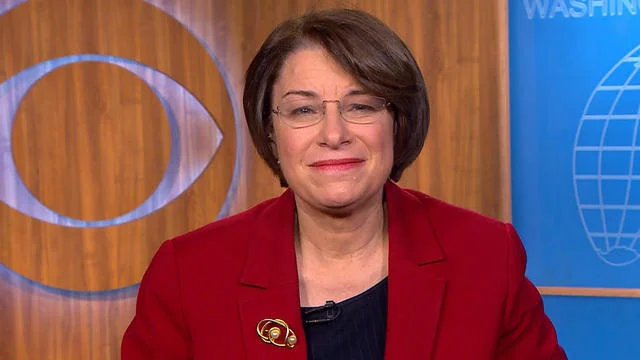

Minnesota Senator Amy Klobuchar is pressing Congress to act on new legislation regulating deepfake technology after a manipulated video of her circulated widely online. The video, which falsely depicted her making offensive remarks about actress Sydney Sweeney and members of the Democratic Party, has become a catalyst in her campaign for stronger protections against AI-generated misinformation. In a New York Times opinion piece, Klobuchar described the personal and political consequences of the incident while outlining her support for new laws.

A Senator Targeted by AI Manipulation

The altered video originated from footage of a Senate Judiciary subcommittee hearing on data privacy, digitally modified to insert crude language and disparaging comments. Although Klobuchar recognized immediately that the video was fabricated, she noted that it had already been viewed more than a million times on the social media platform X. She warned that its realistic appearance made it easy for some viewers to believe the content was authentic.

In her op-ed, Klobuchar expressed frustration with how the platform handled her complaint. She requested that the video either be removed or labeled as AI-generated, but said the company declined to take action. Instead, she was advised to add a Community Note clarifying the video’s origin, a measure she criticized as inadequate. “It was using my likeness to stoke controversy where it did not exist,” she wrote, adding that the clip had her “saying vile things” that undermined trust in public debate.

The controversy reflects broader concerns about how digital platforms respond to manipulated media. While X maintains policies against inauthentic content that may mislead the public, enforcement remains uneven. Critics argue that without stronger safeguards, deepfake technology could be exploited for political disinformation campaigns at scale.

Legislative Efforts and the No Fakes Act

To address these risks, Klobuchar is promoting the No Fakes Act, a bipartisan bill introduced in 2023 with support from Senators Chris Coons, Thom Tillis, and Marsha Blackburn. The proposal, whose full name is the Nurture Originals, Foster Art, and Keep Entertainment Safe Act, seeks to give individuals the right to demand removal of AI-generated replicas of their voice or likeness. The bill includes exemptions for parody, satire, and commentary, acknowledging the balance between regulation and free expression.

Klobuchar argues that the legislation is urgently needed to protect both public figures and private citizens. She emphasized that in the absence of legal recourse, individuals must rely on platforms’ discretionary policies, which often fail to act consistently or swiftly. By codifying rules into law, she contends, Congress could provide clear standards for what constitutes unlawful use of deepfake technology.

The proposal has drawn attention from across the political spectrum, reflecting growing bipartisan concern about the dangers of manipulated media. With elections approaching, lawmakers are increasingly aware of the potential for deepfakes to spread false narratives, damage reputations, and erode public trust. Supporters of the bill argue that establishing baseline protections is essential to maintaining the integrity of political and social discourse.

Criticism and Ongoing Debate

Despite its bipartisan backing, the No Fakes Act has also faced criticism from digital rights advocates. The Electronic Frontier Foundation (EFF), for example, has warned that the bill could unintentionally create what it calls “a new censorship infrastructure.” While the bill provides carve-outs for satire and commentary, the organization notes that proving those exceptions in court could be financially burdensome for creators and smaller organizations.

The debate highlights the tension between combating misinformation and preserving freedom of expression. Supporters believe the bill strikes an appropriate balance by allowing legitimate commentary while restricting harmful impersonations. Opponents caution that poorly designed regulations could suppress lawful speech and create unintended consequences for online content.

Meanwhile, Klobuchar’s experience underscores the difficulty of combating misinformation once it spreads. Although the senator’s op-ed brought attention to the deepfake and helped frame the legislative debate, it also amplified awareness of the video itself, which continues to circulate on social media. The incident illustrates both the urgency of addressing deepfakes and the challenges of doing so in a digital environment where content spreads rapidly and is difficult to contain.